The winning model combines AI power with human judgment.

Human-in-the-Loop Legal AI: Why Full Automation Is a Myth (and Investors Should Be Glad)

Introduction: The Automation Fantasy vs. Reality

The pitch was seductive: artificial intelligence would automate legal work entirely, eliminating the need for expensive attorneys while delivering faster, cheaper, more consistent legal services. Fully autonomous legal AI would review contracts without human oversight, conduct research without attorney verification, and provide legal advice without professional involvement. Lawyers would become obsolete — or at least dramatically marginalized — as legal AI automation handled everything from document review to litigation strategy. The autonomous AI legal services future seemed inevitable.

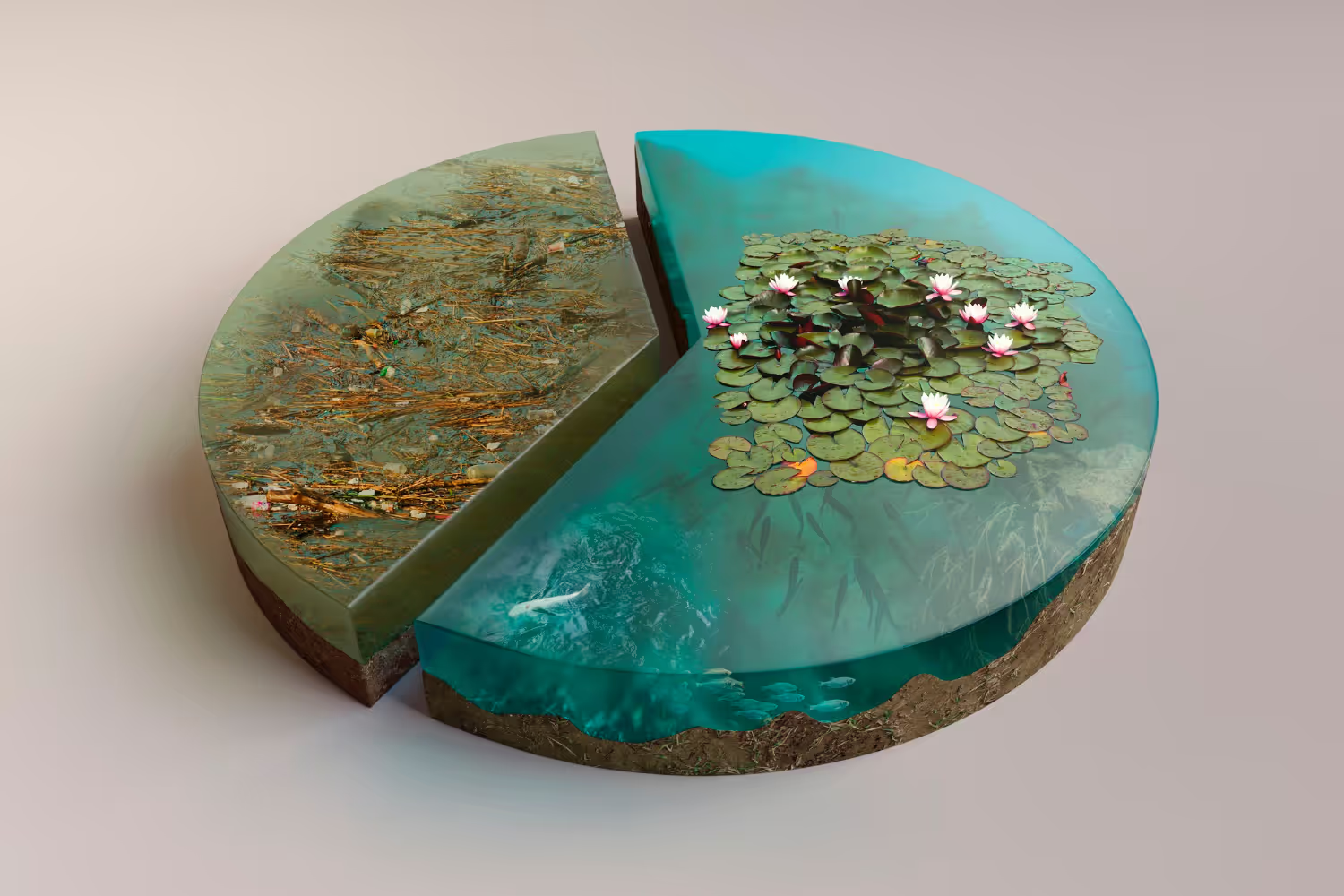

That vision has collided with reality. The legal AI automation fantasy has given way to the understanding that human-in-the-loop legal AI is the practical, profitable, and sustainable approach to legal technology. The companies building successful legal AI software have embraced hybrid legal AI that augments human capabilities rather than attempting to replace human judgment entirely. The fully autonomous approach has failed not because the technology isn't impressive, but because legal work requires qualities that machines cannot reliably provide alone.

The shift toward human-in-the-loop legal tech reflects hard-learned lessons about AI limitations in law, regulatory constraints, liability exposure, and market reality. Legal AI systems that promised full automation have encountered accuracy limitations that make unsupervised operation dangerous. Regulatory frameworks designed around professional responsibility cannot accommodate autonomous AI legal practice. Liability exposure from AI errors without human oversight creates unacceptable risk. And customers — the lawyers, corporations, and individuals who consume legal services — don't actually want fully autonomous AI; they want AI-assisted legal services that make human professionals more effective.

For legal tech investors, this reality should provide comfort rather than disappointment. The hybrid legal AI model creates more defensible businesses with better unit economics than the fully autonomous approaches that captured early attention. Companies that tried to eliminate humans from legal processes face margin compression, liability exposure, and regulatory barriers. Companies that positioned AI as a tool for augmenting human professionals have built sustainable legal AI business models with pricing power and competitive moats.

The human-in-the-loop versus autonomous AI debate in legal technology has been resolved by market reality. The winning approach combines AI power with human judgment, creating value that neither humans nor AI could create alone. Understanding why legal AI cannot be fully automated, and why hybrid models win, is essential for anyone investing in, building, or deploying AI-powered legal software.

"The fully autonomous legal AI companies burned through hundreds of millions chasing a future that regulatory and market reality won't permit," observes a veteran legal tech investment AI specialist. "The human-in-the-loop companies built real businesses. The irony is that the 'less ambitious' approach created more value."

— Emily Radford

This analysis examines why full legal automation remains a myth, how human-AI collaboration models win in legal services, and what the hybrid approach means for legal AI investment strategy.

Why Full Legal Automation Fails

The autonomous legal AI vision fails for fundamental reasons that technology advancement alone cannot overcome. Understanding these barriers illuminates why human-in-the-loop AI represents the winning approach.

The Accuracy Problem

Legal AI accuracy limitations make fully autonomous operation dangerous. Even the most advanced large language models exhibit error rates that are acceptable for human-assisted workflows but unacceptable for unsupervised operation. The accuracy challenge in legal AI applications is fundamental, not temporary:

Hallucination risk — AI systems generate plausible-sounding but factually incorrect information. In legal contexts, hallucinated case citations, invented statutory provisions, or fabricated contract terms create serious consequences. According to research on AI hallucination, these errors occur even in state-of-the-art systems and cannot be fully eliminated through training. The legal AI hallucination problem has caused high-profile embarrassments and liability exposure.

Edge case failures — AI systems trained on common patterns struggle with unusual situations that legal work frequently presents. The rare but important cases (novel fact patterns, unusual contract provisions, jurisdictional variations) often receive the worst AI treatment. Edge cases are exactly the situations where errors cause the greatest harm.

Context limitations — AI systems cannot fully understand the context that shapes legal analysis: the client's business objectives, the relationship history between parties, the strategic considerations that inform legal advice. Missing context produces technically correct but practically wrong outputs. This gap in contextual understanding cannot be closed through better training alone.

Confidence miscalibration — AI systems often express high confidence in incorrect answers and low confidence in correct ones. This miscalibration makes human oversight essential; without it, users cannot distinguish reliable outputs from unreliable ones. Interpreting an AI system's certainty claims requires human judgment.

Training data limitations — AI models trained on historical legal documents may not reflect current law, recent precedents, or emerging legal theories. The legal AI training data constraints mean AI knowledge lags legal reality.

The legal AI limitations around accuracy don't mean AI isn't useful — they mean AI requires human oversight to catch errors before they cause harm. AI-assisted legal work with human verification delivers AI benefits while managing accuracy risks that autonomous operation cannot manage.
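The confidence miscalibration described above can be measured once attorneys have reviewed a sample of AI outputs. The Python sketch below is illustrative only: the function names, the five-bin grouping, and the sample records are assumptions, not taken from any particular product. It groups reviewed outputs by the confidence the AI reported and compares that confidence with how often the reviewing attorney found the output correct.

```python
def calibration_gaps(records, n_bins=5):
    """Compare reported confidence with observed accuracy per confidence bin.

    records: list of (confidence, was_correct) pairs, where confidence is the
    AI-reported value in [0, 1] and was_correct is the attorney's verdict.
    Returns a list of (bin_index, count, mean_confidence, accuracy, gap).
    """
    bins = [[] for _ in range(n_bins)]
    for confidence, was_correct in records:
        # Clamp so that a confidence of exactly 1.0 lands in the last bin.
        index = min(int(confidence * n_bins), n_bins - 1)
        bins[index].append((confidence, was_correct))

    report = []
    for index, items in enumerate(bins):
        if not items:
            continue
        mean_confidence = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        # A positive gap means the AI was more confident than it was accurate.
        report.append((index, len(items), mean_confidence, accuracy,
                       mean_confidence - accuracy))
    return report


def expected_calibration_error(records, n_bins=5):
    """Weighted average of the absolute confidence/accuracy gap across bins."""
    total = len(records)
    return sum(count / total * abs(gap)
               for _, count, _, _, gap in calibration_gaps(records, n_bins))


# Hypothetical review sample: (AI-reported confidence, attorney found it correct)
sample = [(0.9, True), (0.9, False), (0.9, False), (0.9, True),
          (0.3, True), (0.3, False)]
ece = expected_calibration_error(sample)
```

A large positive gap in the high-confidence bins is the pattern the text warns about: outputs that look reliable but are not. A measurement like this informs where reviewers concentrate; it does not replace review.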

The Regulatory Barrier

Legal AI regulation creates fundamental barriers to fully autonomous operation. The legal profession operates under regulatory frameworks designed around professional responsibility that AI cannot satisfy:

Unauthorized practice of law — providing legal services without attorney involvement violates regulations in virtually every jurisdiction. According to unauthorized practice of law restrictions, legal advice and representation require licensed attorney involvement. Fully autonomous AI cannot satisfy this requirement.

Professional responsibility — attorneys have ethical obligations including competence, diligence, and supervision that cannot transfer to AI systems. The attorney remains responsible for work product regardless of AI involvement.

Malpractice liability — attorneys carry malpractice insurance that covers errors in professional judgment. AI systems cannot be insured as professionals, leaving liability gaps that autonomous operation cannot bridge.

Confidentiality obligations — attorney-client privilege and confidentiality rules create obligations around information handling that autonomous AI systems may not satisfy.

The legal AI compliance challenges aren't temporary obstacles awaiting regulatory evolution; they reflect fundamental principles about professional accountability that regulators show no inclination to abandon. Human oversight of legal AI isn't just good practice; it's legally required for most legal services.

The Liability Exposure

Legal AI liability creates risk profiles that make fully autonomous operation untenable for most applications:

Error consequences — legal errors can cause catastrophic harm. Missed deadlines eliminate claims. Contract mistakes create binding obligations. Incorrect advice exposes clients to liability. The severity of these consequences makes AI error rates that might be acceptable elsewhere unacceptable in legal work.

Attribution challenges — when AI makes errors, liability attribution becomes complex. Is the AI vendor responsible? The deploying organization? The supervising attorney? Autonomous operation without clear human responsibility creates liability ambiguity that increases risk for all parties.

Insurance gaps — professional liability insurance covers attorney errors but may not cover AI errors, particularly in autonomous operation. Organizations deploying autonomous legal AI may face uninsured exposure.

Client expectations — clients expect human professional accountability for legal services. Autonomous AI that eliminates professional responsibility violates client expectations and may breach engagement terms.

The legal AI risk management reality requires human involvement to maintain professional accountability, insurance coverage, and client confidence. Human-supervised legal AI manages liability in ways autonomous operation cannot.

The Quality Ceiling

Legal AI quality encounters a ceiling that human involvement can exceed:

Judgment requirements — legal work frequently requires judgment calls that AI cannot make: assessing witness credibility, predicting opposing counsel behavior, weighing strategic alternatives. These judgment elements often determine outcome more than the analytical tasks AI excels at.

Creativity needs — effective legal work often requires creative approaches: novel arguments, unconventional strategies, inventive solutions. AI systems trained on existing patterns struggle to generate truly creative approaches.

Relationship factors — legal outcomes often depend on relationships: with judges, opposing counsel, clients, regulators. AI cannot build or leverage relationships that human professionals develop.

Strategic integration — legal work must integrate with business strategy, and AI systems cannot understand business context that shapes legal priorities. Human judgment connects legal analysis to business reality.

The AI legal services quality ceiling means fully autonomous operation delivers inferior outcomes compared to human-AI collaboration. Human-in-the-loop legal AI produces better results while managing risks that autonomous operation creates.

The Human-in-the-Loop Advantage

Human-in-the-loop AI legal models deliver advantages that fully autonomous approaches cannot match. Understanding these advantages explains why hybrid models win.

Error Correction and Quality Assurance

Human-AI collaboration in legal work enables error correction that filters out AI's most dangerous outputs:

Hallucination catching — human reviewers identify and correct AI hallucinations before they cause harm. The human in the loop serves as a quality filter that catches errors AI cannot self-detect.

Judgment application — humans apply judgment to AI outputs, distinguishing technically correct answers from contextually appropriate ones. This judgment layer elevates quality above what AI alone achieves.

Edge case handling — humans recognize unusual situations that require special handling, preventing AI from applying standard patterns to non-standard cases.

Confidence calibration — humans assess AI confidence appropriately, treating high-confidence errors with appropriate skepticism and low-confidence correct answers with appropriate trust.

The AI-augmented legal services model treats AI as a powerful tool requiring human oversight rather than an autonomous agent requiring no supervision. This framing produces better outcomes while managing risk.
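The review steps above can be sketched as a simple pre-review check that tells the attorney where to look first. This is a minimal illustration under stated assumptions: `DraftOutput`, `review_reasons`, the 0.8 confidence floor, and the idea of a firm-maintained set of already verified citations are all hypothetical, and the case names are invented placeholders. Every draft still goes to an attorney; the flags only prioritize attention.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class DraftOutput:
    """An AI-generated draft awaiting attorney review (hypothetical shape)."""
    text: str
    citations: List[str]
    confidence: float  # AI-reported confidence in [0, 1]


def review_reasons(draft: DraftOutput,
                   verified_citations: Set[str],
                   confidence_floor: float = 0.8) -> List[str]:
    """Return the reasons a reviewer should give this draft extra scrutiny.

    An empty list does not mean the draft is safe to release unreviewed;
    high-confidence outputs can still be wrong.
    """
    reasons = []
    # Citations not found in the verified set may be hallucinated.
    unverified = [c for c in draft.citations if c not in verified_citations]
    if unverified:
        reasons.append("unverified citations: " + ", ".join(unverified))
    if draft.confidence < confidence_floor:
        reasons.append(
            f"confidence {draft.confidence:.2f} below floor {confidence_floor:.2f}")
    return reasons


# Hypothetical example data; the case names are placeholders, not real cases.
verified = {"Smith v. Jones (placeholder)"}
draft = DraftOutput(
    text="Summary of the limitation-period argument.",
    citations=["Smith v. Jones (placeholder)", "Doe v. Roe (placeholder)"],
    confidence=0.95,
)
flags = review_reasons(draft, verified)
```

Here the draft reports high confidence yet cites a source that is not in the verified set, which is the case where a human reviewer adds the most value.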

Regulatory Compliance

Human-in-the-loop legal tech satisfies regulatory requirements that autonomous AI cannot:

Professional involvement — human attorney involvement satisfies unauthorized practice restrictions. The attorney supervises AI outputs and takes professional responsibility for deliverables.

Ethical compliance — human oversight enables compliance with professional responsibility obligations including competence, diligence, and supervision.

Liability management — human involvement maintains professional liability coverage and creates clear accountability for errors.

Privilege protection — attorney involvement protects confidentiality and privilege in ways autonomous AI operation may not.

The regulatory compliance achievable through human-in-the-loop models enables deployment in contexts where autonomous AI faces insurmountable regulatory barriers.

Customer Preference

Market reality favors human-AI collaboration over full automation:

Trust requirements — legal services involve high-stakes decisions where clients want human accountability. Trust in AI alone remains insufficient for most legal contexts.

Relationship value — clients value relationships with human professionals who understand their businesses, histories, and preferences. AI cannot replicate relationship value that human professionals provide.

Communication needs — legal matters require communication with humans: explaining options, discussing strategy, providing reassurance. AI cannot fully substitute for human communication in high-stakes contexts.

Outcome responsibility — when legal matters go wrong, clients want human professionals who bear responsibility and can explain what happened. Autonomous AI cannot provide this accountability.

The legal tech market reality is that customers don't want full automation — they want augmentation that makes human professionals more effective while maintaining the human relationship, accountability, and judgment they value.

Table 1: Full Automation vs. Human-in-the-Loop Comparison

| Factor | Full Automation Approach | Human-in-the-Loop Approach |
| --- | --- | --- |
| Accuracy | Unmanaged error rates, hallucination risk | Human verification catches errors |
| Regulatory | Violates UPL in most jurisdictions | Satisfies professional requirements |
| Liability | Unclear attribution, insurance gaps | Professional accountability maintained |
| Quality Ceiling | Limited by AI capabilities | Human judgment elevates quality |
| Customer Acceptance | Low trust, relationship absence | Trust maintained, relationships preserved |
| Business Model | Margin pressure, commodity risk | Value-based pricing, differentiation |

Author: Emily Radford;

Source: esmife.com

How Hybrid Models Create Value

The hybrid AI legal services model creates value through mechanisms that fully autonomous approaches cannot replicate. Understanding value creation explains investor preference for human-in-the-loop legal AI companies.

Productivity Multiplication

AI productivity models for law firms multiply human capability rather than replacing it:

Speed amplification — AI handles time-consuming tasks (document review, research synthesis, initial drafting) faster than humans, enabling attorneys to complete more work in less time. AI document review software can process thousands of documents in hours rather than weeks.

Capacity expansion — attorneys using AI tools, including those on in-house legal teams, can handle more matters simultaneously, so enterprise legal automation increases effective capacity without proportional headcount growth.

Quality improvement — AI assistance improves work product quality by catching errors, ensuring completeness, and providing consistency. AI contract analysis software identifies issues humans might miss while humans catch AI errors.

Focus elevation — AI handles routine tasks, enabling attorneys to focus on high-value activities requiring human judgment, creativity, and relationships. Legal workflow automation redirects attorney effort to highest-value activities.

Legal workflow automation through human-in-the-loop models improves attorney productivity by 30-50% or more in applicable task categories, creating value for law firms, legal departments, and their clients. The scalable legal AI approach scales through human leverage rather than human replacement.
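A productivity gain that applies only to some task categories translates into a smaller gain in overall capacity. The arithmetic below is a rough sketch, not a figure from the source: the function name is hypothetical, and the 40% share of attorney time spent on AI-applicable tasks is an assumed value chosen only to show the calculation.

```python
def overall_capacity_gain(task_share: float, task_productivity_gain: float) -> float:
    """Estimate the overall capacity gain when only some tasks speed up.

    task_share: fraction of attorney time spent on AI-applicable tasks (0 to 1).
    task_productivity_gain: productivity gain on those tasks (0.5 means 50%).
    Returns the overall gain as a fraction (0.15 means 15% more work per hour).
    """
    if not 0.0 <= task_share <= 1.0:
        raise ValueError("task_share must be between 0 and 1")
    if task_productivity_gain <= -1.0:
        raise ValueError("task_productivity_gain must be greater than -1")
    # Time for the same workload: unaffected tasks keep their share,
    # affected tasks shrink by the productivity factor.
    time_after = (1.0 - task_share) + task_share / (1.0 + task_productivity_gain)
    return 1.0 / time_after - 1.0


# Assumption for illustration: 40% of attorney time is on applicable tasks.
ASSUMED_TASK_SHARE = 0.4
low_estimate = overall_capacity_gain(ASSUMED_TASK_SHARE, 0.30)
high_estimate = overall_capacity_gain(ASSUMED_TASK_SHARE, 0.50)
```

Under that assumed 40% share, a 30-50% gain on applicable tasks works out to roughly 10% to 15% more overall capacity. The larger the share of work that AI can assist, the closer the overall gain comes to the task-level figure.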

Pricing Power and Margins

Human-in-the-loop AI business models maintain pricing power that full automation destroys:

Value-based pricing — augmentation models can price based on value delivered rather than competing on cost. AI makes human professionals more valuable, supporting premium pricing for AI-assisted legal services.

Differentiation sustainability — human involvement creates differentiation that pure AI cannot match. Different firms using the same legal AI software deliver different outcomes based on human judgment quality.

Margin protection — augmentation models protect professional margins rather than commoditizing services. AI-powered legal software improves efficiency, and the professionals who use it capture that efficiency as improved margins.

Upsell opportunity — human-in-the-loop creates opportunities for professional services around AI deployment, training, and optimization that pure legal automation software products lack.

The legal AI business model economics favor hybrid approaches. According to professional services economics, human involvement maintains the leverage and margin structures that make professional services businesses attractive.

Defensibility and Moats

Human-in-the-loop legal AI creates competitive moats that autonomous AI lacks:

Workflow integration — hybrid models integrate into human workflows, creating switching costs and stickiness that standalone AI tools lack.

Training feedback — human corrections improve AI models over time, creating data advantages that compound with usage.

Relationship stickiness — human relationships built around AI-augmented services create retention that pure AI services cannot match.

Implementation depth — human-in-the-loop requires deeper implementation and customization, creating switching costs and competitive protection.

The legal AI competitive advantage from hybrid models proves more sustainable than advantages from AI capability alone, which competitors can replicate or exceed.

Risk-Adjusted Returns

Human-in-the-loop approaches deliver superior risk-adjusted returns:

Liability management — human oversight manages liability exposure that autonomous operation creates, reducing risk profile.

Regulatory compliance — hybrid models satisfy regulatory requirements, eliminating regulatory risk that autonomous approaches face.

Market timing — hybrid models generate value today rather than depending on regulatory evolution or technology advancement that may or may not occur.

Customer acceptance — hybrid models match customer preferences, reducing market adoption risk.

The legal AI investment risk profile for human-in-the-loop companies proves substantially better than for autonomous approaches, making equivalent returns more attractive and superior returns more achievable.

The Failure Pattern of Full Automation

Examining companies that pursued fully autonomous legal AI reveals common failure patterns that validate human-in-the-loop superiority. The limits of legal AI automation become clear through these case studies.

Case Pattern: Document Automation

Companies that attempted fully autonomous document automation — generating legal documents without attorney review — encountered predictable problems:

Quality failures — documents contained errors that caused client harm, generating liability and reputational damage. Without human oversight, the AI document review software produced unacceptable error rates.

Regulatory action — bar associations investigated and sometimes acted against unauthorized practice violations. Compliant legal AI requires human professional involvement.

Customer rejection — after initial enthusiasm, customers recognized quality limitations and demanded human review. Legal tech adoption at law firms stalled when quality issues emerged.

Margin collapse — without differentiation, autonomous document generators faced commodity pricing and margin pressure.

The survivors pivoted to AI-assisted legal work with attorney review, acknowledging that human oversight of legal AI is necessary for quality, compliance, and market acceptance.

Case Pattern: Legal Research

Companies that attempted fully autonomous legal research — providing research results without attorney verification — faced similar challenges:

Hallucination incidents — high-profile cases of AI citing non-existent precedents damaged credibility and created liability. AI's limitations in legal judgment became publicly visible.

Quality inconsistency — research quality varied unpredictably, making autonomous results unreliable for important matters. The risk of relying on AI for legal decisions proved unacceptable.

Value compression — without a professional service wrapper, research became a commodity subject to pricing pressure.

Trust deficit — attorneys wouldn't rely on unverified research for significant matters, limiting use cases.

The successful legal research companies adopted AI-augmented research models where AI accelerates research while attorneys verify and synthesize results. Responsible AI legal tech requires verification loops.

Case Pattern: Contract Review

Companies pursuing fully autonomous AI contract review encountered structural limitations:

Context blindness — AI couldn't understand business context that shapes contract review priorities. Contract lifecycle management AI requires human strategic input.

Judgment absence — AI identified issues but couldn't assess significance or recommend responses without human judgment. AI due diligence workflows require human prioritization.

Relationship irrelevance — contract review often involves relationship considerations that AI cannot factor in.

Client expectations — clients expected human professional accountability for review conclusions.

The winning AI contract analysis software companies positioned their products as augmentation tools that help attorneys review faster rather than autonomous systems that replace attorney review. AI in legal operations works best when humans remain in control.

What Investors Should Look For

The legal AI investment landscape rewards human-in-the-loop approaches. Understanding what distinguishes strong hybrid AI legal tech models from weak ones guides investment selection.

Model Architecture

Strong human-in-the-loop AI models exhibit specific architectural characteristics:

Clear human integration points — well-defined stages where humans review, approve, or augment AI outputs. The distinction between AI-assisted and automated legal work should be architecturally enforced.

Confidence scoring — AI that expresses confidence levels, enabling humans to focus attention on uncertain outputs. Auditability requirements for legal AI demand transparency about AI certainty.

Feedback mechanisms — systems that learn from human corrections, improving over time. Enterprise legal AI platforms should incorporate continuous learning.

Audit trails — documentation of human-AI interaction that supports compliance and quality assurance. Governance requirements for legal AI demand traceability.

Workflow fit — integration into existing human workflows rather than requiring workflow transformation. AI-enabled legal operations software should enhance rather than disrupt existing processes.
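The architectural characteristics above can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not a description of any vendor's system: `WorkItem`, `AuditEvent`, `submit_ai_output`, `attorney_review`, and `release` are hypothetical names. The point it demonstrates is that release is blocked in code until an attorney has approved the output, that every step is logged, and that each attorney correction is captured as a feedback example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional, Tuple


@dataclass
class AuditEvent:
    timestamp: str
    actor: str
    action: str
    detail: str = ""


@dataclass
class WorkItem:
    item_id: str
    ai_output: str
    ai_confidence: float  # confidence score reported by the AI, 0 to 1
    status: str = "pending_review"
    final_output: Optional[str] = None
    audit_trail: List[AuditEvent] = field(default_factory=list)

    def log(self, actor: str, action: str, detail: str = "") -> None:
        """Append a timestamped entry to the audit trail."""
        now = datetime.now(timezone.utc).isoformat()
        self.audit_trail.append(AuditEvent(now, actor, action, detail))


def submit_ai_output(item_id: str, ai_output: str, ai_confidence: float) -> WorkItem:
    """Register an AI output; it starts in the pending_review state."""
    item = WorkItem(item_id, ai_output, ai_confidence)
    item.log("ai", "generated", f"confidence={ai_confidence:.2f}")
    return item


def attorney_review(item: WorkItem, attorney: str,
                    approved_text: str) -> Optional[Tuple[str, str]]:
    """Human integration point: the attorney approves or corrects the output.

    Returns an (ai_output, corrected_text) pair when the attorney changed the
    text, which can be stored as feedback for later model improvement.
    """
    corrected = approved_text != item.ai_output
    item.final_output = approved_text
    item.status = "approved"
    item.log(attorney, "corrected" if corrected else "approved")
    return (item.ai_output, approved_text) if corrected else None


def release(item: WorkItem) -> str:
    """Deliver the output; refuses anything an attorney has not approved."""
    if item.status != "approved" or item.final_output is None:
        raise RuntimeError("release blocked: attorney approval required")
    item.log("system", "released")
    return item.final_output
```

A typical flow calls `submit_ai_output`, then `attorney_review`, then `release`; calling `release` first raises an error, which is what "architecturally enforced" means in practice. The confidence score is recorded for the reviewer's benefit and does not bypass the approval step.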

Market Positioning

Strong legal AI companies position appropriately:

Augmentation messaging — positioning as tools that make professionals more effective rather than replacements for professionals. Framing the product as AI-assisted legal services resonates with buyers.

Professional partnership — partnering with law firms and legal departments rather than competing against them. Ethical AI in legal tech requires respect for professional roles.

Compliance emphasis — demonstrating understanding of regulatory requirements and how products satisfy them. AI risk management capabilities should be prominent.

Outcome focus — emphasizing outcomes (faster, better, cheaper) rather than automation as an end in itself. ROI messaging speaks to buyer priorities.

Unit Economics

Sustainable legal AI business models show characteristic unit economics:

Professional services margin — maintaining professional services margin structures rather than pure software economics.

Implementation revenue — generating revenue from implementation and customization that creates switching costs.

Success-based alignment — pricing models that align vendor success with customer outcomes. Scalable legal AI should scale value, not just volume.

Retention strength — high retention reflecting genuine value delivery rather than lock-in.

Competitive Dynamics

Strong competitive positions in the legal AI market exhibit:

Workflow integration depth — deep integration that creates competitive moats.

Data advantages — proprietary data or training advantages from customer usage.

Professional relationships — relationships with legal professionals that pure technology companies lack.

Regulatory relationships — engagement with bar associations and regulators that supports market positioning.

Table 2: Legal AI Investment Evaluation Framework

| Criterion | Strong Human-in-the-Loop | Weak or Autonomous |
| --- | --- | --- |
| Positioning | Augmentation, professional partnership | Replacement, disruption narrative |
| Architecture | Clear human integration, confidence scoring | Autonomous operation, no human checkpoints |
| Compliance | Regulatory awareness, UPL compliance | Regulatory risk, unclear compliance |
| Economics | Professional margin, implementation revenue | Software-only margins, commodity pricing |
| Customers | Law firms, legal departments as partners | Law firms, legal departments as targets |
| Defensibility | Workflow integration, data advantages | AI capability alone |


The Sustainable Future of Legal AI

The future of legal AI belongs to hybrid models that optimize human-AI collaboration rather than pursuing unachievable full automation. Understanding where the market is heading helps investors and companies position appropriately.

Technology Evolution Within Hybrid Framework

AI capabilities will continue advancing, but advancement will occur within human-in-the-loop frameworks:

Better augmentation — AI will handle more tasks and handle them better, but humans will retain oversight roles. The AI-powered legal tools of the future will be more capable but still human-supervised.

Smarter confidence — AI will better identify when human review is essential versus when AI confidence is warranted. Legal AI confidence scoring will improve, enabling more efficient human-AI workflows.

Deeper integration — AI will integrate more deeply into human workflows, becoming more seamless while remaining supervised. AI integration into legal workflows will feel natural rather than intrusive.

Expanded scope — AI will address more legal task categories while maintaining human oversight architecture. Categories currently requiring intensive human effort will become AI-assisted legal tasks with efficiency gains.

Improved feedback loops — systems will better learn from human corrections, improving faster with usage. Training legal AI on human feedback will accelerate model improvement.

The legal AI development trajectory improves augmentation rather than achieving replacement. Each generation of AI will be more useful to human professionals without eliminating the need for human involvement.

Regulatory Evolution

Regulatory frameworks will evolve but will not abandon professional responsibility:

Standards development — regulators will develop standards for appropriate AI use in legal services. Legal AI ethics frameworks will emerge that define acceptable human-AI collaboration patterns.

Competence requirements — attorney competence obligations will expand to include AI supervision skills. Understanding AI's limitations in legal work will become a professional competency.

Disclosure expectations — transparency about AI use in legal services may become required. Clients will expect disclosure of AI-assisted legal work in engagement letters and deliverables.

Quality standards — standards for human oversight of legal AI may emerge. Legal AI quality assurance requirements will define minimum human review expectations.

Liability frameworks — clearer frameworks for AI-related liability in legal services will develop. The allocation of legal AI liability between attorneys, firms, and vendors will become more defined.

The legal AI regulation evolution will define appropriate human-AI collaboration rather than permitting autonomous operation. Regulators will embrace AI's benefits while maintaining professional accountability.

Market Maturation

The legal tech market will mature around hybrid models:

Best practices emergence — industry best practices for human-in-the-loop legal AI will develop and spread. Leading firms will publish guidance on effective AI-augmented legal services delivery.

Quality differentiation — firms will differentiate on human-AI collaboration quality rather than AI capability alone. The human component becomes the differentiator when AI capabilities commoditize.

Pricing standardization — pricing models for AI-augmented legal services will standardize. The market will develop shared expectations for how AI efficiency translates to client value.

Talent evolution — legal talent will develop AI collaboration skills as a professional competency. Law schools will teach legal AI usage, and firms will train attorneys in effective AI supervision.

Vendor consolidation — the legal AI vendor landscape will consolidate around winning hybrid approaches. Autonomous approach vendors will either pivot or fail.

Investor Implications

The legal AI investment thesis for a mature market remains focused on human-in-the-loop models:

Sustainable returns — hybrid models generate sustainable returns rather than boom-bust cycles. The legal AI business model economics favor steady growth over speculative moonshots.

Consolidation opportunity — market maturation creates consolidation opportunities for leading hybrid players. Legal AI M&A activity will favor human-in-the-loop leaders.

Margin stability — professional service margins remain stable rather than collapsing toward commodity software pricing. AI-augmented legal services maintain value-based pricing.

Exit optionality — strategic acquirers prefer sustainable hybrid businesses to autonomous approaches with regulatory risk. Legal AI exit valuations will reflect risk-adjusted sustainability. The legal AI acquisition market favors companies with defensible, compliant business models.

Long-term positioning — hybrid model investments position for long-term market evolution rather than betting on regulatory change that may not occur. The legal AI market trends favor sustainable augmentation over speculative automation.

Frequently Asked Questions (FAQ)

1. Why can't AI fully automate legal work?

Legal AI automation faces fundamental barriers that technology advancement alone cannot overcome: AI limitations in law, including hallucination risk, make unsupervised operation dangerous; regulatory frameworks requiring attorney involvement for legal services prohibit fully autonomous legal AI in most contexts; liability exposure from AI errors without human oversight creates unacceptable risk; and legal work requires judgment, creativity, and relationship factors that AI cannot replicate. These barriers reflect the fundamental limits of legal AI automation and the requirements of professional regulation, which human-in-the-loop legal AI addresses and full automation cannot.

2. What is human-in-the-loop legal AI and how does it work?

Human-in-the-loop legal AI combines AI capabilities with human oversight at defined integration points. Legal AI software handles tasks like document review, research synthesis, and initial drafting, providing speed and consistency advantages. Humans review AI outputs, catching errors, applying judgment, and taking professional responsibility for deliverables. The AI-assisted legal services model multiplies human productivity while maintaining quality, compliance, and accountability that autonomous operation cannot provide. Enterprise legal AI platforms implement this architecture at scale.

3. Why should investors prefer human-in-the-loop legal AI companies?

Investing in human-in-the-loop legal AI companies offers superior risk-adjusted returns: hybrid legal AI companies satisfy regulatory requirements that autonomous approaches violate; they manage liability exposure through professional accountability; they match customer preferences for human involvement in high-stakes legal matters; and they build defensible businesses with demonstrable ROI rather than commodity AI services. Sustainable legal AI business models generate stable margins, strong retention, and competitive moats that autonomous approaches cannot match.

4. Will AI ever fully automate legal work?

Full legal automation remains unlikely for most legal services because the limits of AI in legal judgment are fundamental. Even as AI capabilities advance, barriers persist: legal work involves judgment that AI cannot replicate; professional regulation requires human attorney involvement; liability frameworks assume human accountability; and clients prefer human professional relationships for high-stakes matters. Legal AI development will keep expanding what AI-assisted work can do, with AI handling more tasks and handling them better, but human oversight will remain essential. Responsible legal AI embraces augmentation, not replacement.

5. How should legal AI companies position themselves for success?

Successful legal AI companies position themselves as augmentation partners: messaging emphasizes productivity gains for law firms rather than job elimination; products like AI contract analysis software integrate into human workflows with clear review points; product demonstrations show compliance and regulatory awareness; sustainable business models maintain professional service margins; and customer relationships treat law firms and legal departments as partners. Ethical AI in legal tech helps legal professionals succeed rather than threatening their existence. The market rewards AI tools, including those built for in-house legal teams, that enhance rather than replace professional judgment.

Conclusion: The Wisdom of Hybrid Models

The victory of human-in-the-loop legal AI over fully autonomous approaches reflects market wisdom, not a limitation of the technology. The hybrid model wins because it correctly identifies where AI creates value in legal work and correctly acknowledges where human involvement remains essential.

AI creates value in legal work by accelerating tasks humans can do but do slowly, by ensuring consistency humans achieve imperfectly, and by enabling scale humans cannot achieve alone. AI-augmented legal services capture this value while maintaining the quality, compliance, and accountability that legal services require. The legal AI productivity gains are real and substantial — attorneys using AI effectively accomplish more, faster, and often better than attorneys working without AI assistance.

But AI cannot replace the judgment that distinguishes good legal work from adequate legal work. It cannot satisfy the professional responsibility obligations that define legal practice. It cannot bear the liability that legal services require someone to bear. And it cannot provide the human accountability and relationship that clients want from their legal service providers. Human oversight of legal AI isn't a compromise or a limitation; it is a recognition of where value actually lives in the legal AI value chain.

The hybrid approach to AI legal services represents a mature understanding of technology's role in professional services. Technology augments human capability without replacing human judgment. It handles the routine while humans handle the exceptional. It provides scale while humans provide accountability. This division of labor between human and machine optimizes outcomes in ways that neither pure human effort nor pure AI automation could achieve.

For legal tech investors, the human-in-the-loop thesis provides clarity in a market often clouded by AI hype. The sustainable legal AI businesses are those that augment rather than replace, that partner rather than disrupt, that satisfy rather than challenge regulatory requirements. These businesses may seem less ambitious than the fully autonomous visions that captured early attention — but they generate returns while the fully autonomous companies generate regulatory challenges, liability exposure, and customer rejection.

The investment discipline required is resisting the appeal of revolutionary narratives in favor of evolutionary reality. Legal AI investment strategy should prioritize companies building sustainable human-AI collaboration over companies promising impossible human replacement. The returns from realistic approaches exceed the returns from fantastic ones — not because realistic is better, but because realistic actually works.

The legal AI market will continue evolving. AI capabilities will advance. New applications will emerge. But the fundamental architecture, in which AI is a powerful tool supervised by human professionals, will persist because it correctly reflects where value and responsibility reside. The human-AI collaboration model in legal tech is not a temporary state awaiting full automation; it is the permanent structure of how AI creates value in legal services.

The fully autonomous legal AI future isn't delayed — it was never coming. The human-in-the-loop present is the sustainable future, and investors who recognize this will be glad they did. The companies that embrace hybrid models will build lasting businesses. The investors who back them will generate returns. And the legal profession will benefit from AI that makes lawyers more effective rather than threatening to make lawyers obsolete.

The content on esmife.com is provided for general informational and educational purposes only. It is intended to present insights, trends, and examples related to investing in legal technology and should not be considered legal, financial, investment, or professional advice.

All information, materials, and references shared on this website are for general informational purposes only. Investment strategies, legal technologies, market conditions, and outcomes may vary based on individual circumstances and should be evaluated independently.

Esmife.com makes no representations or warranties regarding the accuracy, completeness, or reliability of the information provided and is not responsible for any errors or omissions, or for decisions made based on the content presented on this website.